Bird audio detection DCASE challenge – less than a week to go

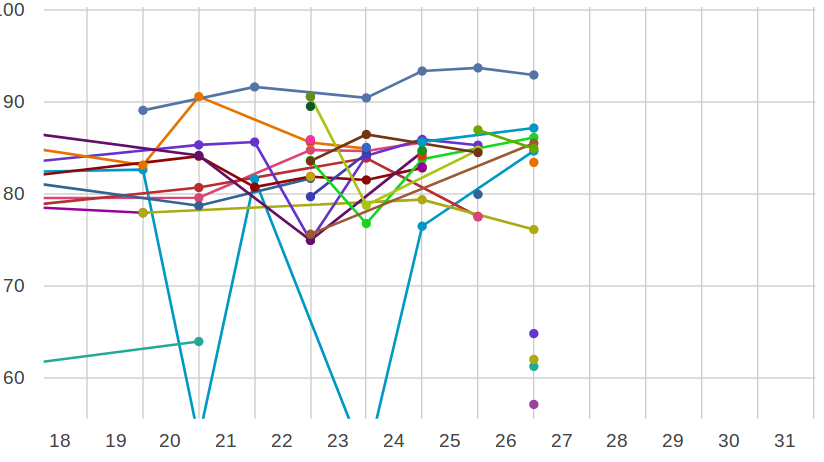

The second Bird Audio Detection challenge, now Task 3 in the 2018 DCASE Challenge, has been running all month, and the leaderboard is hotting up!

There’s less than a week to go.

Who will get the strongest results? How well will these leaderboard preview scores predict the final outcome? Whose algorithm generalises well? (Note: the “preview” scores come from only 1000 audio files, drawn from only two of our three evaluation sets.)

Who is this “ML” consistently getting preview scores above 90%? Will their system beat the one from “Arjun”, which was the first to get past the 90% mark? Will someone get a final score above 90%? Above 95%?

These scores aren’t just for fun: they represent (indirectly) the amount of manual labour saved by an automatic detector. If the AUC score gets twice as close to the 100% mark (for example, moving from 90% to 95%), this indicates approximately halving the number of false-positive detections that you have to tolerate from your system. That can save hundreds of person-hours in data mining, or enable some automation that wasn’t possible before.

Also importantly, which advances in the state of the art are producing these high-quality results? In the first Bird Audio Detection challenge, which was only last year, the strongest system attained 88.7% AUC. This time we made the task harder by expanding to a wider range of datasets, and by using a slightly modified scoring measure. (Instead of the overall AUC, we use the harmonic mean of the AUCs obtained on each of the three evaluation datasets. This tends to yield lower scores, especially for systems which do well on some datasets and poorly on others.) Machine learning is moving fast, and it’s not always clear which new developments provide real benefits in practical applications. Even so, it’s clear that some innovations in bird audio detection have come along since last year.
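To make the scoring change concrete, here is a minimal Python sketch of the harmonic mean of per-dataset AUCs. It is an illustration only, not the challenge's actual evaluation code: the function names `auc` and `challenge_score` and all the numbers are made up for this example, and the AUC is computed in a simple pairwise way that is only suitable for small inputs.

```python
from statistics import harmonic_mean, mean


def auc(labels, scores):
    """Pairwise AUC: the fraction of (positive, negative) pairs in which
    the positive item gets the higher score; ties count as half."""
    pos = [s for lab, s in zip(labels, scores) if lab == 1]
    neg = [s for lab, s in zip(labels, scores) if lab == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative item")
    wins = sum(
        1.0 if p > n else 0.5 if p == n else 0.0
        for p in pos
        for n in neg
    )
    return wins / (len(pos) * len(neg))


def challenge_score(per_dataset_aucs):
    """Harmonic mean of the per-dataset AUCs (hypothetical helper name)."""
    return harmonic_mean(per_dataset_aucs)


# Tiny made-up dataset: 1 = bird present, 0 = no bird.
toy_auc = auc([1, 1, 0, 0], [0.9, 0.4, 0.5, 0.1])

# Made-up per-dataset AUCs for two imaginary systems.
uneven = [0.95, 0.95, 0.60]  # strong on two datasets, weak on the third
even = [0.85, 0.85, 0.85]    # equally good on all three

uneven_arithmetic = mean(uneven)
uneven_harmonic = challenge_score(uneven)
even_harmonic = challenge_score(even)

print(f"toy AUC:                  {toy_auc:.3f}")
print(f"uneven, arithmetic mean:  {uneven_arithmetic:.3f}")
print(f"uneven, harmonic mean:    {uneven_harmonic:.3f}")
print(f"even, harmonic mean:      {even_harmonic:.3f}")
```

With these made-up numbers, the uneven system scores lower under the harmonic mean than under the plain average, while the even system is not penalised at all. That is the sense in which the measure favours systems that generalise across datasets.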

So, we wait with interest to see the technical reports that will be submitted to the DCASE workshop (deadline next week, 31st July!)